Translated articles in the CrossRef database

CrossRef is an organization of scientific publishers that manages the Digital Object Identifiers (DOIs) given to scientific articles. As a consequence it has a large database of scientific works, some of which are translations or have translations. The CrossRef database has an API from which we can request the records of works that are translations (filter=relation.type:is-translation-of) or have translations (filter=relation.type:has-translation). The code we used to download and analyse the data and to produce the results below can be found in our Codeberg Git repository.
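
As an illustration of such a request, here is a minimal sketch in Python. It is not the code from our repository. It assumes the public REST endpoint `https://api.crossref.org/works` and its cursor-based paging (`cursor=*`, then the `next-cursor` value from each response); the function names `build_url` and `fetch_all` are made up for this example.

```python
import json
import urllib.parse
import urllib.request

API = "https://api.crossref.org/works"  # assumed public endpoint


def build_url(relation_type, cursor="*", rows=1000):
    """Build a works query for one relation type, e.g. 'is-translation-of'."""
    params = {
        "filter": f"relation.type:{relation_type}",
        "rows": rows,
        "cursor": cursor,
    }
    return API + "?" + urllib.parse.urlencode(params)


def fetch_all(relation_type):
    """Yield every matching record, following the paging cursor until empty."""
    cursor = "*"
    while True:
        with urllib.request.urlopen(build_url(relation_type, cursor)) as resp:
            message = json.load(resp)["message"]
        items = message["items"]
        if not items:
            break
        yield from items
        cursor = message["next-cursor"]
```

Calling `list(fetch_all("has-translation"))` would then collect the works that have translations; the same call with `"is-translation-of"` collects the translations themselves.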

Results

CrossRef currently knows 4142 translations, while only 2152 works have a translation. This makes sense, as translated articles are often translated into several languages. It could have been the other way around, though: translations quite commonly do not have a DOI and are only linked to by a URL, so they have no entry of their own in the CrossRef database.

Publishers that publish translations tend to publish many of them. On average, a publisher that publishes translations has 72 of them, and only seven publishers have more than 100 translations. Similarly, an average journal that publishes translations has 36 of them.
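
The averages above are simple group counts. A minimal sketch of the calculation, assuming each record is a dictionary with a `publisher` string and a `container-title` list as returned by the API; the sample records and function names are hypothetical and only show the arithmetic.

```python
from collections import Counter
from statistics import mean


def publisher(record):
    return record.get("publisher", "unknown")


def journal(record):
    # The journal name is assumed to be the first entry of 'container-title'.
    titles = record.get("container-title") or ["unknown"]
    return titles[0]


def count_per_group(records, key):
    """Count records per group; `key` maps a record to its group label."""
    return Counter(key(r) for r in records)


def groups_above(counts, threshold):
    """Number of groups with more than `threshold` records."""
    return sum(1 for n in counts.values() if n > threshold)


# Hypothetical sample records, not real data.
sample = [
    {"publisher": "A", "container-title": ["J1"]},
    {"publisher": "A", "container-title": ["J1"]},
    {"publisher": "A", "container-title": ["J2"]},
    {"publisher": "B", "container-title": ["J3"]},
]
per_publisher = count_per_group(sample, publisher)
per_journal = count_per_group(sample, journal)
mean_per_publisher = mean(per_publisher.values())
mean_per_journal = mean(per_journal.values())
```

With the real records, `groups_above(per_publisher, 100)` would give the number of publishers with more than 100 translations.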

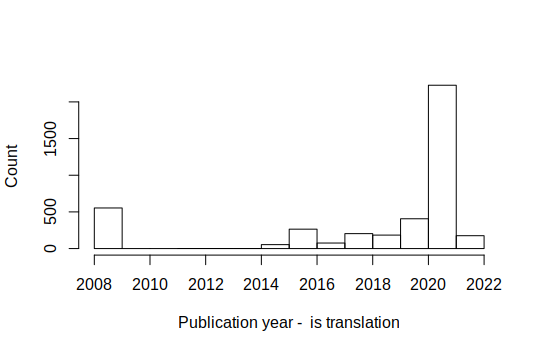

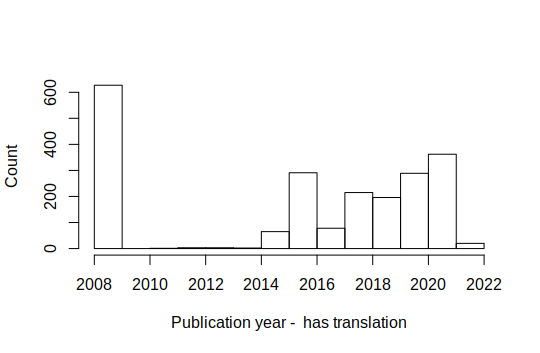

Translations by year

The graph of the number of translations published per year has suspicious peaks at both ends, but they appear to be genuine. The peak in 2021 is due to a large number of articles entered into the record by Pleiades Publishing, which specializes in translations, while the peak in the first year, 2008, is due to an anatomical atlas that was published in 9 languages.

The peak in the first year of the graph for works that have translations is also due to the anatomical atlas.
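
One way to check such peaks is to count records per year and then list which publishers dominate a given year. The sketch below assumes the publication date sits in the record's `issued` field as `date-parts` (a list such as `[[2021, 5, 1]]`, sometimes with a missing year); the function names are made up for this example.

```python
from collections import Counter


def publication_year(record):
    """Return the year from 'issued' date-parts, or None if it is absent."""
    parts = record.get("issued", {}).get("date-parts") or [[None]]
    return parts[0][0] if parts[0] else None


def per_year(records):
    """Count records per publication year, skipping records without a year."""
    years = (publication_year(r) for r in records)
    return Counter(y for y in years if y is not None)


def top_publishers_in(records, year, n=3):
    """List the publishers that contribute most records in one year."""
    counts = Counter(
        r.get("publisher", "unknown")
        for r in records
        if publication_year(r) == year
    )
    return counts.most_common(n)
```

Running `top_publishers_in(records, 2021)` on the downloaded records is the kind of check that would show whether a single publisher explains a peak.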

Where are the translations?

Many works that have a translation point to a URI (generally a URL), not a DOI, so further information on these translations is not in the CrossRef database. URIs are especially common when you look at the number of translations rather than the number of translated works, because works pointing to a URI tend to have multiple translations. Information on these translations (language, title, translator, ...) is not available and will have to be scraped.

The works that have a translation point to a DOI 1466 times and to a URL 750 times (when a work pointed to both, I assumed these were duplicates and counted only the DOIs). The 1466 works pointing to a DOI link to 2311 translations with a DOI (about 1.6 per work), while the 750 works that point to a URL link to 5987 translations (about 8 per work). This is in large part due to the anatomical atlas, which is available in nine languages.
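
The counting rule described above (prefer DOIs when a work points to both) can be sketched as follows. This is an illustration, not the code from our repository. It assumes each record carries its links under `relation` → `has-translation` as a list of entries with `id-type` and `id` keys, and it treats every non-DOI link as a URI; the function names are hypothetical.

```python
def translation_targets(record):
    """Return (kind, ids) for one work, preferring DOIs when both kinds occur."""
    links = record.get("relation", {}).get("has-translation", [])
    dois = [link["id"] for link in links if link.get("id-type") == "doi"]
    others = [link["id"] for link in links if link.get("id-type") != "doi"]
    if dois:
        return "doi", dois
    if others:
        return "uri", others
    return None, []


def tally(records):
    """Count works and distinct translation links per kind of identifier."""
    works = {"doi": 0, "uri": 0}
    translations = {"doi": 0, "uri": 0}
    for record in records:
        kind, ids = translation_targets(record)
        if kind:
            works[kind] += 1
            translations[kind] += len(set(ids))
    return works, translations
```

Dividing `translations[kind]` by `works[kind]` then gives the number of translations per work for each kind of link.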

Articles that are in the CrossRef database as a translation do not often have information on translations themselves. So publishers mostly do not see the "original" as a translation of the translation. Put another way, publishers do not see all language versions as equal, but tend to see one as the original and all other versions as translations.

Languages

Besides the URL-translations, about half of the CrossRef database entries of articles that are or have translations also lack information on the language. Furthermore, by a quick eyeball estimate, in a few percent of cases the language in the database does not match the language of the title. It is quite common for the language to be encoded in the URL or even in the DOI. This is not done in a standard way, although ISO language codes are generally used and the code is often an extension (DOI) or a subdirectory (URL).
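
Because the encoding is not standardized, any extraction can only be a heuristic. A minimal sketch, assuming two-letter ISO 639-1 codes that appear either as the final extension of a DOI or as a path segment of a URL; the code list is a small illustrative subset, the example identifiers in the comments are invented, and short codes such as "it" or "no" can produce false positives.

```python
import re
from urllib.parse import urlsplit

# Illustrative subset of ISO 639-1 codes; a real run would need the full list.
ISO_CODES = {"en", "no", "es", "pt", "ru", "it", "de", "fr"}


def language_from_doi(doi):
    """Guess a language from a trailing extension, e.g. '10.1234/abc.en'."""
    match = re.search(r"[._/-]([A-Za-z]{2})$", doi)
    if match and match.group(1).lower() in ISO_CODES:
        return match.group(1).lower()
    return None


def language_from_url(url):
    """Guess a language from a path segment, e.g. 'https://host/es/article/1'."""
    for segment in urlsplit(url).path.split("/"):
        if segment.lower() in ISO_CODES:
            return segment.lower()
    return None
```

Both functions return `None` when no known code is found, so their output can be treated as one weak signal among several rather than as a definitive answer.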

Of the translations for which we have language information, 2461 are in English, 154 in Norwegian, 44 in Spanish, 43 in Portuguese, 8 in Russian and 7 in Italian. The works that have translations are in Russian 279 times, in English 121 times, in Norwegian 114 times, in Portuguese 8 times and in Italian 5 times.

Discussion

It is likely that many journals also have translations from other, earlier years. As it is a limited set of publishers and journals, we could contact them. For example, the publisher Pleiades, which put so many articles into the database in 2021, has existed since 1971 and may well have a large back catalogue.

As information on the language is often missing, we would need a method to estimate it from the title (and, if possible, the abstract). There are many tools to estimate the language of a text, but the shorter the text (such as a title), the higher the error rate. It would be great to have a method that indicates how accurate the estimate is, to make it easier to combine it with other methods of guessing the language (such as the DOI, URL or location of the publisher), as well as to highlight the difficult cases for manual checks.
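
To make the idea of combining methods concrete, here is a minimal sketch of one possible scheme, a confidence-weighted vote. It assumes every method (title detector, DOI, URL, publisher location) reports a `(language, confidence)` pair with a confidence between 0 and 1; the function name and the review threshold of 0.7 are arbitrary choices for this example, and the resulting share is a rough score, not a calibrated probability.

```python
from collections import defaultdict


def combine_guesses(guesses, review_below=0.7):
    """Merge (language, confidence) guesses from several methods.

    Returns (best_language, share, needs_review), where share is the fraction
    of the total confidence that supports the winning language.
    """
    scores = defaultdict(float)
    for language, confidence in guesses:
        if language is not None and confidence > 0:
            scores[language] += confidence
    if not scores:
        # No usable evidence at all: always flag for a manual check.
        return None, 0.0, True
    total = sum(scores.values())
    best = max(scores, key=scores.get)
    share = scores[best] / total
    return best, share, share < review_below
```

For instance, `combine_guesses([("en", 0.6), ("en", 0.9), ("ru", 0.3)])` picks English with most of the weight behind it, while two equally confident but conflicting guesses produce a low share and are flagged for manual checking.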